LoweBot | An autonomous customer service and inventory management robot (~2014)

LoweBot, OSHbot and NAVii are autonomous robots that were deployed across multiple retailers in the US and Japan, including Lowe's Home Improvement stores, BevMo, SAM's, Yamada Denki, and Parco, as well as in industrial settings such as warehouses and factories. They are autonomous customer service robots that help customers find items in stores and scan inventory overnight using computer vision.

Building a Personal AI Workstation with 4x NVIDIA RTX 6000 Pro Blackwell GPUs (2025)

I built a personal workstation with 4x RTX 6000 Pro Blackwell Max-Q GPUs (384GB total VRAM), originally designed as an AI workstation that you can keep under your desk and run from a standard 15A, 120V power outlet.

The total BOM (bill of materials) cost of this workstation is ~$39.5K.

Jensen Huang (NVIDIA founder and CEO) signed the first one I built:

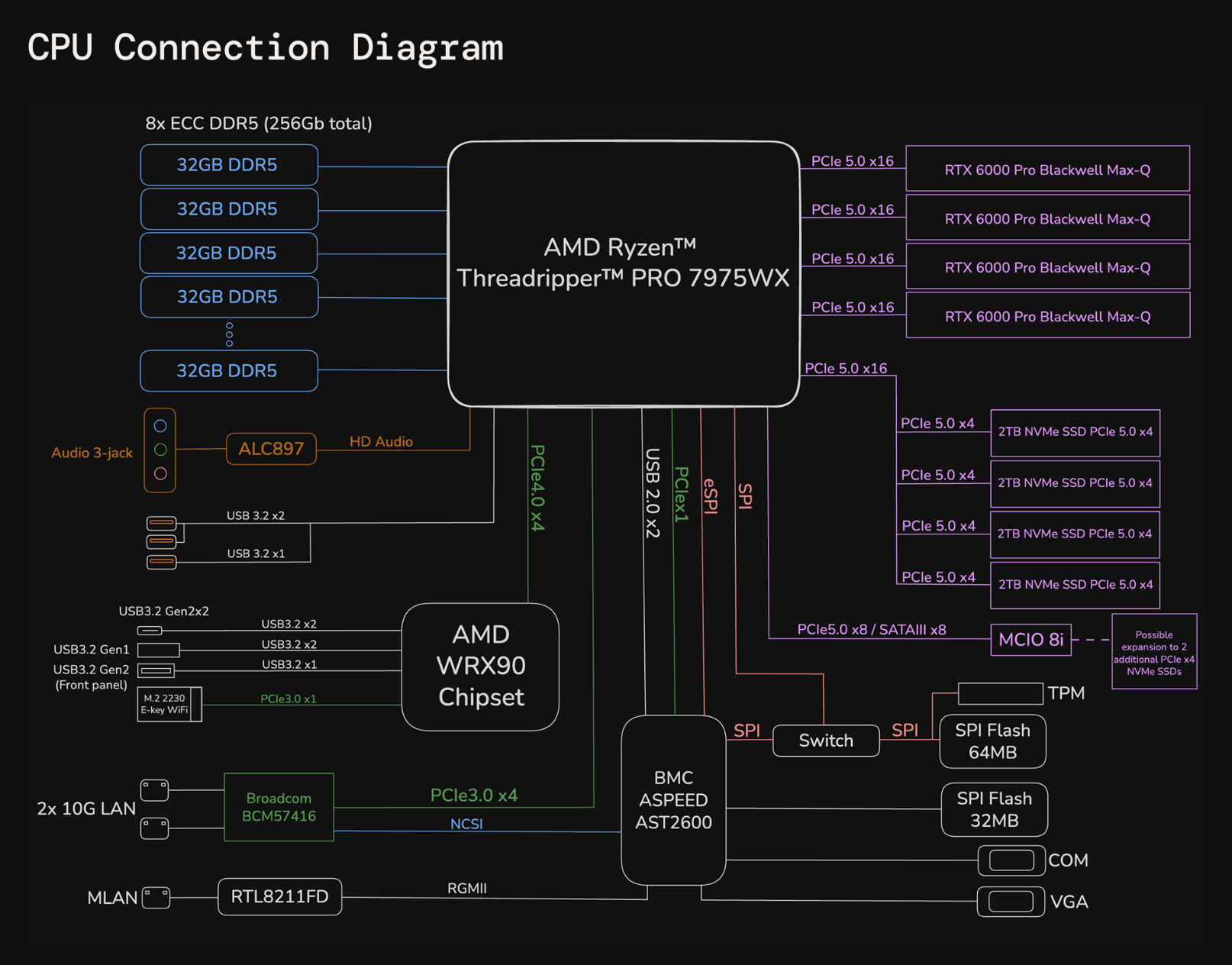

Parts:

- 4x NVIDIA RTX 6000 PRO Blackwell Max-Q, 384GB total GDDR7 VRAM (96GB per GPU), all cards running on PCIe 5.0 x16 lanes.

- 8TB of NVMe PCIe 5.0 storage in RAID 0, with 59.6GB/s aggregate theoretical read throughput. With NVIDIA GPUDirect Storage (GDS), the GPUs can fetch data directly from the NVMe drives via direct memory access (DMA), skipping the staging copy through DDR5 system RAM.

- AMD Threadripper PRO 7975WX (32 cores, 64 threads)

- 256GB ECC DDR5 RAM

- 1650W at peak (runs on a standard 15A/120V circuit).
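The 1650W peak figure can be sanity-checked with simple arithmetic. The sketch below assumes 300W per GPU (the Max-Q spec), the Threadripper PRO 7975WX's 350W TDP, and a hypothetical 100W allowance for everything else; it is a rough budget, not a measurement.

```python
# Rough peak power budget for the 4-GPU workstation (a sketch, not measured data).
GPU_WATTS = 300      # RTX 6000 PRO Blackwell Max-Q board power
NUM_GPUS = 4
CPU_WATTS = 350      # Threadripper PRO 7975WX TDP
OTHER_WATTS = 100    # hypothetical allowance: RAM, NVMe, fans, pump, BMC


def peak_draw_watts() -> int:
    """Sum of the assumed component peak draws."""
    return NUM_GPUS * GPU_WATTS + CPU_WATTS + OTHER_WATTS


def circuit_capacity_watts(amps: float = 15, volts: float = 120) -> float:
    """Nameplate capacity of one branch circuit, P = I * V."""
    return amps * volts


if __name__ == "__main__":
    draw = peak_draw_watts()
    cap = circuit_capacity_watts()
    print(f"peak draw: {draw} W, circuit: {cap:.0f} W, headroom: {cap - draw:.0f} W")
```

Under these assumptions the script reports 1650 W against an 1800 W circuit, leaving about 150 W of headroom; the four GPUs account for most of the budget, which is why the 300W Max-Q variants are what make a single standard outlet workable.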

The RTX 6000 PRO Blackwell Max-Q delivers 24,064 CUDA cores, 96GB of GDDR7 VRAM, and a PCIe 5.0 x16 interface on the next-gen Blackwell architecture, all within a remarkably efficient 300W power envelope.

The liquid-cooled AMD Threadripper PRO 7975WX features 32 cores and 64 threads on 5nm Zen 4 (Storm Peak), with DDR5 support, 128 PCIe 5.0 lanes, 128MB of L3 cache, and clock speeds of 4.0 GHz base / up to 5.3 GHz boost.

The workstation also integrates an ASPEED AST2600 Baseboard Management Controller (BMC), a dedicated processor for remote out-of-band management that operates independently of the host CPU and OS to handle critical monitoring and control tasks.

This was built for founders of a16z portfolio companies. The full guide on how to build one can be found here.

AI GPU Workstation with 8x 4090/5090 GPUs with PCIe 5.0 x16 lanes (2025)

I built a couple of GPU workstations with RTX GPUs. The RTX 4090 and RTX 5090 are absolute beasts: the RTX 4090 has 24GB of VRAM and 16,384 CUDA cores, the RTX 5090 has 32GB of VRAM and 21,760 CUDA cores, and both deliver exceptional FP16/BF16 tensor performance for their cost.

The RTX 3090 was the last consumer RTX GPU with NVLink; starting with the RTX 4090, there is no NVLink interconnect, which matters because NVLink provides high GPU-to-GPU bandwidth when training models across cards. This means PCIe connectivity becomes the key factor: running each card on the latest PCIe version it supports (4.0 for the 4090, 5.0 for the 5090) with x16 lanes is essential to maximize bandwidth between the cards.
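To put the PCIe point in numbers, here is a small calculator for theoretical per-direction link bandwidth. It assumes the published per-lane transfer rates (16 GT/s for PCIe 4.0, 32 GT/s for PCIe 5.0) and 128b/130b encoding, and it ignores packet and protocol overhead, so real-world throughput is somewhat lower.

```python
# Theoretical per-direction bandwidth of a PCIe link (line encoding only).
GT_PER_S = {3: 8, 4: 16, 5: 32}  # per-lane transfer rate by PCIe generation


def pcie_gb_per_s(gen: int, lanes: int = 16) -> float:
    """Approximate GB/s in one direction, ignoring packet/protocol overhead."""
    raw_gbit = GT_PER_S[gen] * lanes         # 1 transfer = 1 bit per lane
    payload_gbit = raw_gbit * 128 / 130      # 128b/130b encoding (PCIe 3.0+)
    return payload_gbit / 8                  # bits to bytes


if __name__ == "__main__":
    for gen in (4, 5):
        print(f"PCIe {gen}.0 x16: {pcie_gb_per_s(gen):.1f} GB/s per direction")
```

That works out to roughly 31.5 GB/s for PCIe 4.0 x16 and 63.0 GB/s for PCIe 5.0 x16; a card dropped to x8 on PCIe 5.0 only matches a full PCIe 4.0 x16 link.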

As an experiment and for research purposes, I built two 8x RTX 4090 AI workstation nodes from scratch for training, deploying, and running AI models locally. The build is also designed to be compatible with the new RTX 5090 running on PCIe 5.0 x16 lanes.

The parts used per workstation:

- Server model: ASUS ESC8000A-E12P

- GPUs: 8x NVIDIA RTX 4090

- CPU: 2x AMD EPYC 9254 Processor (24-core, 2.90GHz, 128MB Cache)

- RAM: 24x 16GB PC5-38400 (DDR5-4800) ECC RDIMM (384GB total)

- Storage: 1.92TB Micron 7450 PRO Series M.2 PCIe 4.0 x4 NVMe SSD (110mm)

- Operating system: Ubuntu Linux 22.04 LTS Server Edition (64-bit)

- Networking: 2x 10GbE LAN ports (RJ45, Intel X710-AT2), one in use at 10Gb/s. For faster interconnect speeds, you can add a Mellanox card (or use one in place of these ports).

- Additional PCIe 5.0 card: ASUS 90SC0M60-M0XBN0
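The RAM line encodes its own bandwidth: PC5-38400 is the per-module peak in MB/s. The sketch below multiplies that out, assuming each EPYC 9254 (Genoa) socket has 12 DDR5 channels and that the 24 RDIMMs are installed one per channel across both sockets. It is a theoretical peak, not a benchmark.

```python
# Theoretical peak DRAM bandwidth for the dual-socket EPYC 9254 build.
MT_PER_S = 4800           # DDR5-4800, sold as PC5-38400
BUS_BYTES = 8             # 64-bit data path per channel
CHANNELS_PER_SOCKET = 12  # assumption: EPYC 9004 (Genoa) memory channels
SOCKETS = 2
DIMM_GB = 16
NUM_DIMMS = 24


def per_channel_mb_s() -> int:
    """4800 MT/s * 8 bytes = 38,400 MB/s, hence the PC5-38400 name."""
    return MT_PER_S * BUS_BYTES


def aggregate_gb_s() -> float:
    """Peak bandwidth with every channel on both sockets populated."""
    return CHANNELS_PER_SOCKET * SOCKETS * per_channel_mb_s() / 1000


if __name__ == "__main__":
    print(f"{per_channel_mb_s()} MB/s per channel")
    print(f"{aggregate_gb_s():.1f} GB/s aggregate across both sockets")
    print(f"{NUM_DIMMS * DIMM_GB} GB total capacity")
```

That gives 38,400 MB/s per channel and about 921.6 GB/s in aggregate (384GB total). Each socket directly reaches only its own 12 channels, so NUMA-aware placement matters for CPU-side data loading.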

This was built only for fun and educational purposes. The full guide can be found here.

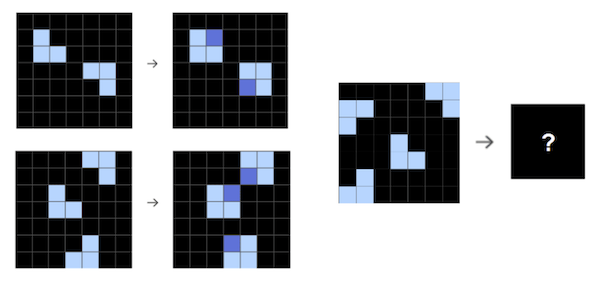

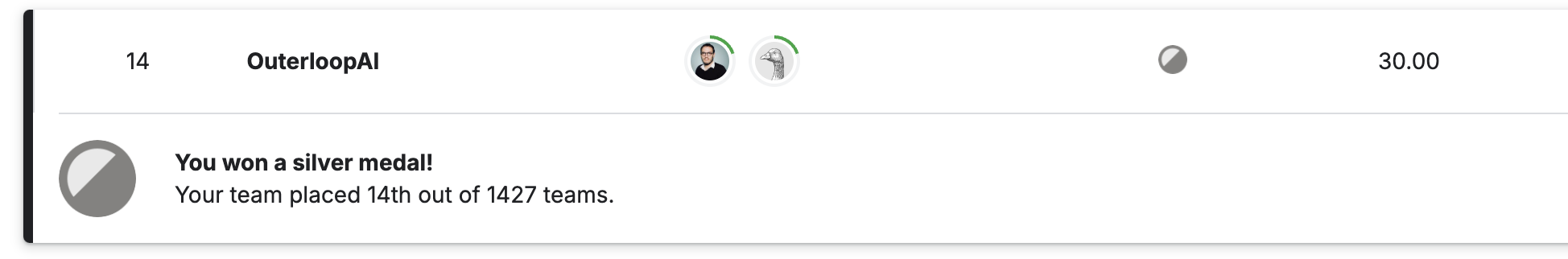

Silver medalist, 2024 ARC-AGI Prize, 14th place, top 1% (Dec 2024)

I spent ~2.5 weeks building a submission to the ARC-AGI Prize competition on Kaggle, using a custom set of trained models, to see how far AI models could be pushed on this benchmark.

The submission placed 14th out of 1,427 teams, earning a Kaggle silver medal for finishing in the top 1% of all participants. I plan to keep working on this (still mostly for fun), but training these models can get a bit pricey.

The prize competition has strict requirements: the model(s) must fit on a single P100 GPU (16GB) or two T4s (16GB each), all tasks must complete within a maximum running time of 12 hours, and the solution must run completely offline, which rules out GPT-4, Claude, API calls, etc. That leaves only a few pre-trained models you can use.
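A quick way to see why a 16GB limit narrows the choice of models: weight memory is roughly parameter count times bytes per parameter. The helper below is a back-of-the-envelope sketch; the 20% overhead factor for KV cache, activations, and CUDA context is a hypothetical rule of thumb, and it treats 1GB as 10^9 bytes.

```python
# Back-of-the-envelope check: do a model's weights fit in the GPU memory budget?
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp16": 2, "int8": 1, "int4": 0.5}


def weights_gb(params_billion: float, dtype: str) -> float:
    """Approximate weight memory in GB (1e9 params * bytes/param = GB)."""
    return params_billion * BYTES_PER_PARAM[dtype]


def fits(params_billion: float, dtype: str,
         budget_gb: float = 16.0, overhead: float = 1.2) -> bool:
    """True if weights plus a hypothetical 20% runtime overhead fit the budget.

    Use budget_gb=16 for one P100; budget_gb=32 for two T4s only applies if
    the model is actually split across both cards.
    """
    return weights_gb(params_billion, dtype) * overhead <= budget_gb


if __name__ == "__main__":
    for size in (3, 8):
        for dtype in ("bf16", "int4"):
            print(f"{size}B {dtype}: {weights_gb(size, dtype):.1f} GB, "
                  f"fits on one P100: {fits(size, dtype)}")
```

Under these assumptions an 8B model in bf16 (about 16GB of weights alone) does not fit on a single P100, while the same model quantized to 4 bits (about 4GB) does.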

Some approaches:

- Inference-time search

- Extended test-time compute

- Data augmentation and synthetic data of known puzzles (transduction)

- CoT traces on multiple solutions (positive and negative) with grids (transduction)

- CoT traces on multiple solutions (positive and negative) with code synthesis (induction)

- Long training runs over synthetic data

- Multiple models for overall solution (induction and transduction)

- Active inference (train model on the fly)

- Used Llama3 and Qwen models
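The data augmentation bullet can be illustrated with a common ARC recipe: apply one of the 8 rotations/reflections of the grid plus a color permutation, using the same transform on a task's input and output so the underlying rule is preserved. This is a minimal sketch of that general technique, not the exact pipeline used in the submission; keeping color 0 fixed as the background is an assumption.

```python
import random
from typing import Callable, List, Tuple

Grid = List[List[int]]  # ARC grids: lists of rows, cell values 0-9


def rotate90(g: Grid) -> Grid:
    """Rotate a grid 90 degrees clockwise (works for non-square grids)."""
    return [list(row) for row in zip(*g[::-1])]


def flip_h(g: Grid) -> Grid:
    """Mirror a grid left-to-right."""
    return [row[::-1] for row in g]


def dihedral_transforms() -> List[Callable[[Grid], Grid]]:
    """The 8 symmetries of a square: 4 rotations, each with or without a flip."""
    def rot(k: int) -> Callable[[Grid], Grid]:
        def apply(g: Grid) -> Grid:
            for _ in range(k):
                g = rotate90(g)
            return g
        return apply

    rotations = [rot(k) for k in range(4)]
    flipped = [lambda g, r=r: r(flip_h(g)) for r in rotations]
    return rotations + flipped


def permute_colors(g: Grid, perm: List[int]) -> Grid:
    """Recolor every cell c as perm[c]."""
    return [[perm[c] for c in row] for row in g]


def augment_pair(inp: Grid, out: Grid, rng: random.Random) -> Tuple[Grid, Grid]:
    """Apply the SAME random symmetry and color permutation to an input/output pair."""
    transform = rng.choice(dihedral_transforms())
    others = list(range(1, 10))
    rng.shuffle(others)
    perm = [0] + others  # assumption: keep 0 (background) fixed
    return permute_colors(transform(inp), perm), permute_colors(transform(out), perm)


if __name__ == "__main__":
    rng = random.Random(0)
    print(augment_pair([[0, 1], [2, 0]], [[1, 2], [0, 0]], rng))
```

Each original task can yield up to 8 x 9! variants this way, which is why this family of augmentations is a cheap source of extra training data.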

JungleGym AI | A playground for testing and developing autonomous agents with AI models (2023)

JungleGym is an open-source playground for testing and developing autonomous agents with different agent datasets and benchmarks, like WebArena, Mind2Web, and AgentInstruct. The playground provides realistic, fully functional, sandboxed websites, benchmarks, and an API, all in a single environment.

Llama2.ai | The first Llama 2 chatbot interface (2023)

I built and deployed Llama2.ai, the first AI chatbot interface for the Llama 2 models, which became the most popular interface when Mark Zuckerberg announced them. It handled over 3,000 concurrent requests, running on 100 load-balanced servers and hundreds of H100 GPUs. (Llama2.ai is now run by Replicate.)
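How traffic was distributed across the servers isn't detailed here. As one common option, a least-connections policy sends each new request to the backend with the fewest in-flight requests, which tends to suit long, variable-length LLM generations better than plain round-robin. The sketch below is hypothetical, including the backend names.

```python
from typing import Dict, Iterable


class LeastConnectionsBalancer:
    """Route each request to the backend with the fewest in-flight requests."""

    def __init__(self, backends: Iterable[str]) -> None:
        self.active: Dict[str, int] = {b: 0 for b in backends}

    def acquire(self) -> str:
        """Pick the least-loaded backend (ties go to the earliest listed)."""
        backend = min(self.active, key=self.active.__getitem__)
        self.active[backend] += 1
        return backend

    def release(self, backend: str) -> None:
        """Call when a request finishes streaming its response."""
        self.active[backend] -= 1


if __name__ == "__main__":
    # Hypothetical backend names for 100 GPU servers.
    lb = LeastConnectionsBalancer(f"gpu-{i:03d}" for i in range(100))
    first = [lb.acquire() for _ in range(100)]
    print(len(set(first)))  # each server gets one request before any doubles up
```

A linear scan over 100 backends is cheap; a production balancer would also need health checks and thread-safe counters.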

AnyPod.ai | Semantic Search Engine for YouTube/Podcasts (2022)

A weekend project to search YouTube videos and podcasts for phrases, ideas, or semantic questions. It uses Whisper for transcription, an embedding model (all-mpnet-base-v2) with Sentence Transformers, and FAISS to return the top 20 (k = 20) results across multiple YouTube channels simultaneously.
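The retrieval step amounts to nearest-neighbor search over embeddings. In a pipeline like this one, each transcript segment would be embedded with all-mpnet-base-v2 (768-dimensional vectors) and indexed in FAISS, where a flat inner-product index (`faiss.IndexFlatIP`) over L2-normalized vectors returns exact cosine-similarity matches. The dependency-free sketch below computes the same exact top-k, with toy 3-dimensional vectors standing in for real embeddings.

```python
import heapq
import math
from typing import List, Sequence, Tuple


def normalize(v: Sequence[float]) -> List[float]:
    """Scale a vector to unit L2 norm so inner product equals cosine similarity."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v] if n else list(v)


def top_k(query: Sequence[float], corpus: List[Sequence[float]],
          k: int = 20) -> List[Tuple[int, float]]:
    """Exact brute-force search: (index, cosine score) of the k best matches."""
    q = normalize(query)
    scored = ((i, sum(a * b for a, b in zip(q, normalize(v))))
              for i, v in enumerate(corpus))
    return heapq.nlargest(k, scored, key=lambda t: t[1])


if __name__ == "__main__":
    # Toy vectors standing in for segment embeddings from several channels.
    corpus = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.9, 0.1, 0.0]]
    for idx, score in top_k([1.0, 0.0, 0.0], corpus, k=2):
        print(idx, round(score, 3))
```

Brute force is exact but linear in corpus size; FAISS does the same computation far faster and also offers approximate indexes for larger collections.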

arXivGPT | Twitter bot that posts the most relevant/trending AI papers on arXiv.org (2023)

I originally built arXivGPT for myself, to cut through the noise and find the most relevant AI papers on arXiv.org. arXivGPT selects trending AI papers on arXiv using a set of heuristics and summarizes them, listing their most important points and authors.

YouTranscription | Automated Whisper transcripts from YouTube channels (2022)

A weekend project to automate transcriptions of YouTube videos or channels into searchable transcripts.
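To make transcripts searchable, each timed segment can link back to the exact moment in the video. openai-whisper's `transcribe()` returns a `segments` list whose entries carry `start`, `end`, and `text`; the sketch below takes segments in that shape (so it runs without Whisper installed) and emits timestamped lines with YouTube `&t=` deep links. The video ID and sample segments are made up.

```python
from typing import Dict, List


def fmt_ts(seconds: float) -> str:
    """Format seconds as MM:SS, or H:MM:SS for videos longer than an hour."""
    h, rem = divmod(int(seconds), 3600)
    m, s = divmod(rem, 60)
    return f"{h}:{m:02d}:{s:02d}" if h else f"{m:02d}:{s:02d}"


def segment_line(video_id: str, seg: Dict) -> str:
    """One searchable line per segment, linking to where it starts."""
    start = int(seg["start"])
    url = f"https://www.youtube.com/watch?v={video_id}&t={start}s"
    return f"[{fmt_ts(seg['start'])}] {seg['text'].strip()} ({url})"


def search(video_id: str, segments: List[Dict], phrase: str) -> List[str]:
    """Case-insensitive phrase match over segment text only (not the URLs)."""
    needle = phrase.lower()
    return [segment_line(video_id, s) for s in segments if needle in s["text"].lower()]


if __name__ == "__main__":
    segments = [  # same shape as whisper's result["segments"]
        {"start": 0.0, "end": 4.2, "text": " Hello and welcome."},
        {"start": 65.5, "end": 70.0, "text": " Today we talk about GPUs."},
    ]
    print(search("abc123", segments, "gpus"))
```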

SIMPL (2016)

I trained a custom AI model that makes it easy to program industrial robot arms with no coding required, simply by drawing bounding boxes over objects in an easy-to-use interface. It uses a custom single-shot CNN, trained on thousands of objects, that runs locally.
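Single-shot detectors predict many overlapping candidate boxes in one pass and typically rely on non-maximum suppression (NMS) to keep one box per object. Below is a sketch of standard greedy NMS with intersection-over-union (IoU); it illustrates the general technique, not SIMPL's actual post-processing, and the 0.5 threshold is an assumed default.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) with x2 > x1, y2 > y1


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: List[Box], scores: List[float], iou_thresh: float = 0.5) -> List[int]:
    """Greedy NMS: keep the highest-scoring box, drop boxes overlapping a kept one."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) < iou_thresh for j in keep):
            keep.append(i)
    return keep


if __name__ == "__main__":
    boxes = [(0, 0, 10, 10), (1, 1, 10, 10), (20, 20, 30, 30)]
    scores = [0.9, 0.8, 0.7]
    print(nms(boxes, scores))
```

Here the second box overlaps the first with an IoU of 0.81, so only boxes 0 and 2 survive.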

AI model for weapon detection (2022)

I trained an AI model that detects weapons in low-resolution security camera feeds in real time (~25fps) on constrained compute (the model is optimized for local hardware) and sends SMS alerts.
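At ~25fps, the whole per-frame pipeline (capture, inference, post-processing) has about 40 ms. Alerting on single-frame detections from low-resolution cameras would be noisy, so one plausible design, sketched below as a hypothetical, is to send an SMS only when enough recent frames agree and then hold off for a cooldown period. The window, hit count, and cooldown values are illustrative, and the SMS call itself is left out.

```python
from collections import deque

FPS = 25
FRAME_BUDGET_MS = 1000 / FPS  # 40 ms per frame for capture + inference + post-processing


class AlertDebouncer:
    """Hypothetical alert gate for per-frame weapon detections.

    Fires when at least `min_hits` of the last `window` frames had a detection,
    then suppresses further alerts for `cooldown_frames` frames.
    """

    def __init__(self, window: int = 10, min_hits: int = 6,
                 cooldown_frames: int = FPS * 60) -> None:
        self.recent: deque = deque(maxlen=window)
        self.min_hits = min_hits
        self.cooldown_frames = cooldown_frames
        self._cooldown_left = 0

    def update(self, detected: bool) -> bool:
        """Feed one frame's result; return True when an SMS alert should be sent."""
        self.recent.append(detected)
        if self._cooldown_left > 0:
            self._cooldown_left -= 1
            return False
        if sum(self.recent) >= self.min_hits:
            self._cooldown_left = self.cooldown_frames
            self.recent.clear()
            return True
        return False


if __name__ == "__main__":
    gate = AlertDebouncer(window=5, min_hits=3, cooldown_frames=4)
    frames = [True, False, True, True, True, True, True, True, True]
    print([gate.update(f) for f in frames])
```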

Covid-19 Ventilator (2020)

In response to the ventilator shortage in 2020, I built an open-source Covid ventilator, which helped over 200 teams around the world build and deploy ventilators. The first version ran on a Raspberry Pi; later versions used a custom PCB with an ARM chip.

Covid Contact Tracing by precise proximity with Ultra-wideband (2020)

As many of us were impacted by Covid and wanted to help the community during that difficult time, we created a Covid contact tracing device that measures distance precisely using Ultra-wideband (UWB). The contact tracer helps people socially distance (via haptic feedback) and precisely keeps track of every contact within 10 feet.
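UWB ranging measures radio time of flight rather than signal strength, which is what makes a precise 10-foot threshold practical. The sketch below assumes single-sided two-way ranging (SS-TWR), one common UWB scheme; which scheme the device used isn't stated. The initiator measures the round-trip time, the responder reports its reply delay, and half the difference is the one-way flight time.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0
TEN_FEET_M = 10 * 0.3048  # contact threshold from the text, in meters


def ss_twr_distance_m(t_round_s: float, t_reply_s: float) -> float:
    """Single-sided two-way ranging.

    t_round_s: time the initiator measures from sending its poll to receiving the response.
    t_reply_s: processing delay the responder reports between receiving and replying.
    """
    time_of_flight_s = (t_round_s - t_reply_s) / 2
    return time_of_flight_s * SPEED_OF_LIGHT_M_S


def is_close_contact(distance_m: float, threshold_m: float = TEN_FEET_M) -> bool:
    """Flag a contact (e.g. to trigger haptic feedback) within the threshold."""
    return distance_m <= threshold_m


if __name__ == "__main__":
    t_reply = 500e-6                         # hypothetical 500 us responder delay
    true_tof = 2.0 / SPEED_OF_LIGHT_M_S      # two people 2 m apart
    d = ss_twr_distance_m(t_reply + 2 * true_tof, t_reply)
    print(f"{d:.3f} m, close contact: {is_close_contact(d)}")
```

Because radio waves travel about 30 cm per nanosecond, timestamp errors translate directly into distance errors; SS-TWR is also sensitive to clock drift between devices over long reply delays, which double-sided TWR is designed to reduce.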

Vision-based, autonomous wheelchair robot (2011)

I developed an autonomous wheelchair robot (KuruRobo) for people with disabilities, using VSLAM, while I was doing computer vision research at the Kanazawa Institute of Japan.

Other random ones in Japan